In this post, I apply the “tool” metaphor to two common concerns with respect to online services and privacy: profanity in discussion fora and the publication of “creepshots” - public photos (and associated comments) of women taken without the consent of the subject. These two examples help demonstrate the point that thinking of online services as “tools” better generates innovative responses to social concerns, including those where privacy and free expression interests collide. “Tools” call for solutions that change how the service works, while thinking of services as “spaces” calls for solutions applicable to spaces: weeding, policing, monitoring, borders, and the like.

Here is an example of a solution that changes how a service works. Kendra Albert recently posted a study of game developer efforts to curb abuse, trash talk, and player harassment in online game play. There is of course a time and place for offensive speech, and as a citizen and as an occasionally irritated person, I value the well-timed use of profanity. But there are good reasons, including engagement by children, that many service providers and users alike would prefer to curtail it.

This is a task my former Microsoft colleague Stephen Toulouse worked on every day. I name-drop him in case he wants to comment on my description, but in general terms the techniques used by Xbox Live consisted of responding to user complaints, escalating sanctions for misbehavior, and publishing guidelines. In other words, the Xbox Live “space” was governed by speed limit signs and traffic police.

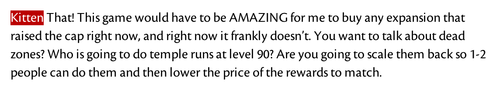

Kendra Albert’s blog describes an innovative approach to this problem: using the tools of code rather than the policing activity appropriate for a networked space. As reproduced on her blog, here is an example of what it looks like when certain swear words are entered:

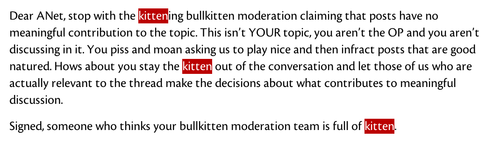

Or when angry terms raise the tension level:

Essentially, a simple algorithm to replace known swear words with “kitten” addresses the concerns with profanity while reducing (or eliminating) the need for staff to monitor chat fora or online comments, and at the same time nudging the mood in a more positive direction.
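To make the idea concrete, here is a minimal sketch of that kind of substitution. It is not the code described on Kendra Albert’s blog or used by any game developer; the `BLOCKED_WORDS` list (filled with mild placeholder words) and the `kittenize` function are hypothetical names chosen for illustration, and a real service would need to handle misspellings, other languages, and context.

```python
import re

# Hypothetical placeholder list; a real service would maintain its own.
BLOCKED_WORDS = ["darn", "heck"]

# Match any blocked word as a whole word, ignoring case.
_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(word) for word in BLOCKED_WORDS) + r")\b",
    re.IGNORECASE,
)


def kittenize(message: str, replacement: str = "kitten") -> str:
    """Return the message with every blocked word swapped for the replacement."""
    return _PATTERN.sub(replacement, message)


if __name__ == "__main__":
    print(kittenize("Well, darn it!"))
```

Because the pattern matches whole words only, innocent words that merely contain a blocked word (such as “darning”) are left alone, which is one small way a tool can be tuned rather than policed.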

The New York Times’s “Bright Ideas” column for October 21st, 2012, presents another dilemma: what rules to set about content published online, given both privacy and free expression concerns? In this case, “creepshots”: photos of women covertly photographed on the street, posted for others to ogle and comment on. The column likens the situation to the challenges of cities: how to achieve a kind of organized chaos, mixing different kinds of people and ideas, inevitably with some collisions.

But why think of online services as cities? Do these services have governments, accountable to their citizens, to set time, place and manner rules for public speech? Most commentators say the answer is “not really” (see, e.g., Rebecca MacKinnon, “Consent of the Networked”), and even if you believe, as I do, that market forces do create some accountability, the capability to make fine-tuned judgments at scale is not common to all online services (and is tough to build and operate in any case).

Thinking of online services as “tools” invites ideas that ask "what is the purpose" of this tool, and that ask how the tool can be better designed to address both user interests and service provider goals. From there, uses of the tool that further its purpose can be distinguished from uses that do not.

What purpose is served by publishing creepshots? Not many socially beneficial purposes that I can identify, but that’s not the argument for publishing them. Indeed, the strongest (and possibly only) argument for publishing them is a negative one: restricting a user’s entitlement to publication would be worse than allowing it. Restrictions on free expression could easily slip over into bans on more valuable speech, and would be arbitrary and/or difficult to enforce.

Those concerns are real, and it would be important to tailor restrictions carefully. But the arguments against publishing "creepshots" identify positive purposes: change the tone of online discourse, promote greater respect for women, and respect privacy in context (one can accept being visible to people on the street around you while also reasonably expecting not to be visible to millions of people online). Framing the argument in terms of purposes pushes the debate closer to the conclusion that service providers could be perfectly justified in restricting "creepshots" from publication, or at least restricting certain language in the comments on such photos. Which they can do in a tailored way, of course, by substituting photos of kittens…